Actively Understanding the Dynamics and Risks of the Threat Intelligence Ecosystem


Following the recent acceptance of our paper “Actively Understanding the Dynamics and Risks of the Threat Intelligence Ecosystem” to the Network and Distributed System Security (NDSS) Symposium 2026, this blog post summarizes my contributions to the project and describes our methodology and results at a high level. The paper represents two years of work with collaborators at Georgia Tech: Tillson Galloway, Omar Alrawi, Thanos Avgetidis, Manos Antonakakis, and my advisor Fabian Monrose.

First, I summarize the methodology, findings, and implications for the threat intelligence ecosystem. Then, I discuss my contributions to the project and explain the design choices in more detail than the paper does.

Our paper and slides can be found on the NDSS website.

Project Summary

Background and Motivation

The cybersecurity industry and community rely heavily on collecting and sharing threat intelligence (TI). Security vendors and other defenders analyze artifacts and produce indicators, such as IP addresses, domains, and file signatures, to detect and respond to threats. Yet despite its scale, the TI ecosystem remains largely a black box, and the sharing patterns of security vendors are inherently difficult to investigate. We know little about how vendors triage artifacts, extract indicators of compromise (IoCs), and disseminate this information. Vendors themselves know little about how their intelligence is used downstream or what threat vectors their analysis exposes.

In our work, we set out to measure the TI ecosystem end to end, from initial artifact submission to the disruption of threats. Our approach reveals not only which vendors participate, but also how quickly they act, how deeply they analyze artifacts, and which threat vectors exist in the ecosystem.

Research Questions

Our research centers on three research questions (RQs):

RQ1 (Propagation): How do security vendors differ in their ability to analyze malware and share extracted indicators of compromise (IoCs) across the ecosystem?

RQ2 (Disruption): How do differences in analysis and sharing affect the speed and effectiveness with which vendors block IoCs or take down (or suspend) infrastructure?

RQ3 (Evasion): How are adversaries exploiting gaps in analysis, sharing, or disruption, and what strategies can improve the ecosystem’s resilience against such evasions?

Measurement Pipeline

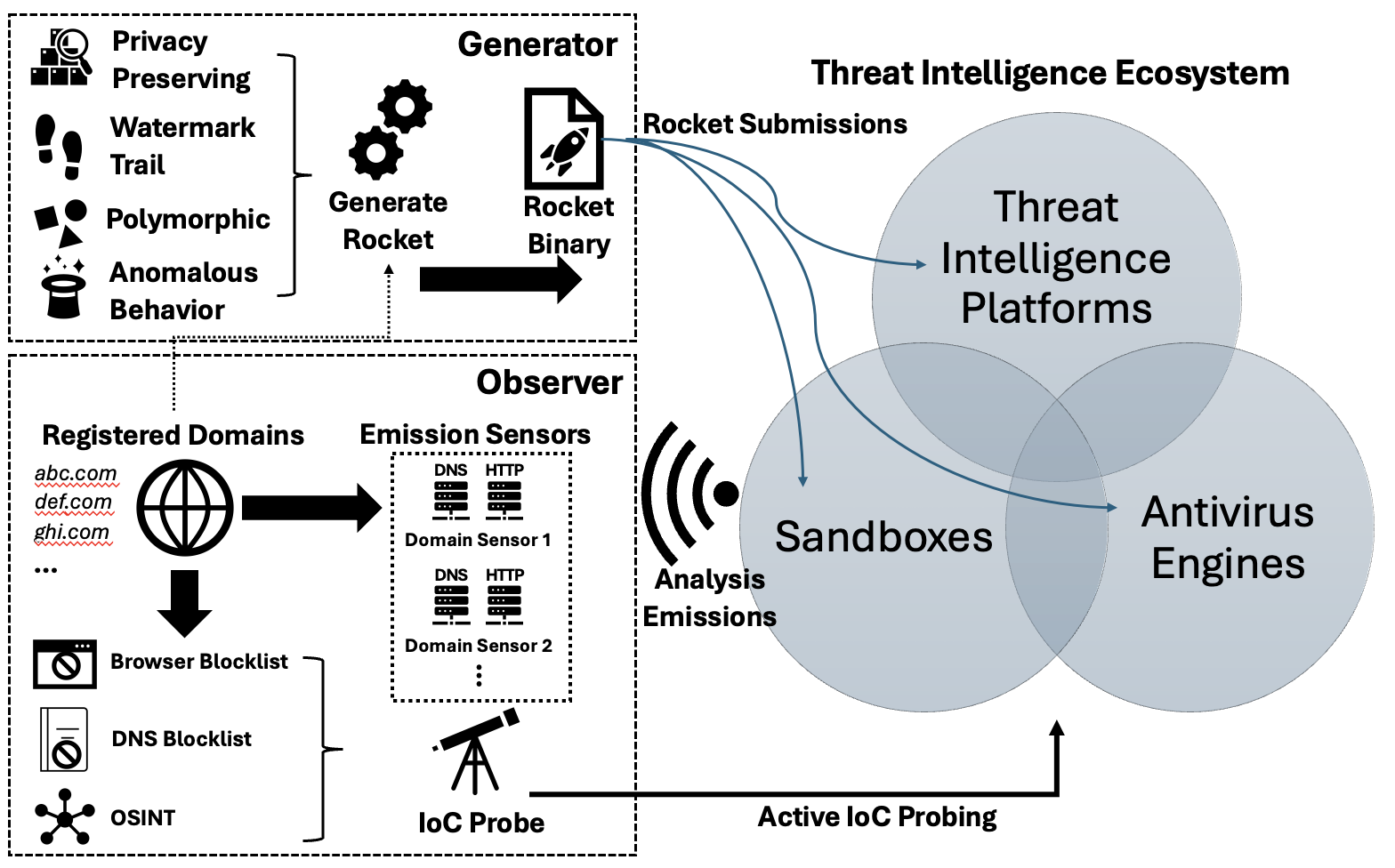

To open this black box, our key idea was to use purpose-built malware as probes that map the TI supply chain. We built a measurement pipeline that tracks each binary as it traverses the ecosystem, from submission to execution(s) to disruption, using a set of observers that monitor for watermarked emissions. The figure above shows the pipeline.

The Generator produces a binary (the “Rocket”) that is submitted to a set of TI platforms, sandboxes, and antivirus engines; each submission receives a unique binary. The Rocket is defanged malware designed to trigger a malicious verdict and subsequent execution. Upon execution, the Rocket collects information about the sandbox environment, encodes it in an HTTP request to a domain we control, and drops a modified copy of itself (a “Satellite”) with an updated provenance trail.

The Observer consists of emission sensors (an authoritative DNS server and an HTTP server) that monitor for requests produced by our binaries; each such request indicates an execution. Identifiers in the request map uniquely to submissions, so emissions can be tracked with high confidence. The Observer also includes IoC probes that actively track whether our domains, IPs, and artifact hashes appear in blocklists (Google Safe Browsing, Quad9, VirusTotal, AlienVault OTX, and commercial feeds).

This design lets us observe each stage of the IoC propagation chain, which we can think of as an IoC lifecycle. We derive information or receive indicators at (1) submission; (2) first execution in a sandbox; (3) each subsequent sharing event and execution, including where it occurred; (4) when IoCs are acted on to disrupt threats (blocking); and (5) domain suspension.

Methodology and Scale

We submitted unique Rockets to 30 vendors across three categories:

- 10 Antivirus vendors (e.g., Kaspersky, Sophos, Avira)

- 10 Malware sandbox services (e.g., Hybrid Analysis, Any.Run, Intezer)

- 10 TI platforms (e.g., VirusTotal, MalwareBazaar, AlienVault OTX)

We registered 9 domains, assigned each domain to a vendor category, and carried out multiple experiments. We chose domains with no prior registrations in DNS zone files to avoid contaminating the experiments with residual traffic. Each Rocket was unique to its vendor and was submitted manually.

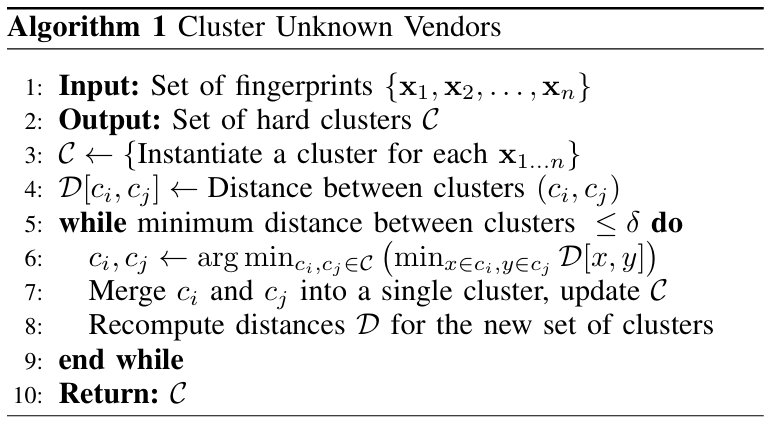

To label vendors, we use the data exfiltrated from each sandbox. The first execution of a Rocket is attributed to the vendor it was submitted to, while later executions can be attributed with relatively high confidence by clustering similar sandbox environments. This yielded 62 labeled clusters: 19 known from first submission and 43 generated via clustering.

Key Findings

Despite extraction being common, sharing and acting on TI are rare. 20 of the 30 submitted Rockets were executed, but only 5 vendors shared the extracted TI, and only 2 contributed to downstream domain takedowns. There is a large gap between IoC extraction and the dissemination of actionable intelligence.

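“Nexus” vendors may create single points of failure. We label 4 vendors as “nexus” vendors: they have high in- and out-degree in the TI sharing graph, meaning they both consume and share TI. Because many other vendors depend on them, a gap in a nexus vendor's analysis propagates to everyone downstream.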
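To make the nexus criterion concrete, the following minimal Go sketch counts distinct upstream and downstream neighbors in a directed sharing graph and flags vendors that are high on both. The `Edge` type, the vendor names, and the `minDegree` cutoff are hypothetical; the paper's exact definition of "high" degree may differ.

```go
package main

import (
	"fmt"
	"sort"
)

// Edge records that intelligence flowed from one vendor to another.
type Edge struct{ From, To string }

// nexusVendors returns vendors whose in-degree and out-degree, counted over
// distinct neighbors, both reach minDegree. The result is sorted by name.
func nexusVendors(edges []Edge, minDegree int) []string {
	in := map[string]map[string]bool{}
	out := map[string]map[string]bool{}
	add := func(m map[string]map[string]bool, k, v string) {
		if m[k] == nil {
			m[k] = map[string]bool{}
		}
		m[k][v] = true
	}
	for _, e := range edges {
		add(out, e.From, e.To)
		add(in, e.To, e.From)
	}
	var nexus []string
	for v, succ := range out {
		// len of a missing (nil) inner map is 0, so pure producers are skipped.
		if len(succ) >= minDegree && len(in[v]) >= minDegree {
			nexus = append(nexus, v)
		}
	}
	sort.Strings(nexus)
	return nexus
}

func main() {
	// Hypothetical sharing graph: X both consumes from and shares with several vendors.
	edges := []Edge{
		{"A", "X"}, {"B", "X"}, {"C", "X"},
		{"X", "D"}, {"X", "E"}, {"X", "F"},
		{"A", "D"},
	}
	fmt.Println(nexusVendors(edges, 2))
}
```

Counting distinct neighbors rather than raw edges keeps a single chatty relationship from inflating a vendor's degree.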

Adversaries actively exploit sandbox fingerprints. Using VirusTotal Retrohunt, we identified 874 malware samples uploaded within a 90-day window (March to June 2025) that contain sandbox-specific IPs from our experiments. Two popular open-source stealer families (such as this one on GitHub) dynamically download IP blocklists from GitHub to detect and evade sandboxes. Simulating evasion with public blocklists showed a 25% reduction in the number of vendors receiving extracted TI.
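The first step of such a simulation can be approximated as a set-membership test: a vendor's sandbox is evaded if any of its egress IPs falls inside a prefix on a public blocklist. The sketch below assumes that model and uses Go's `net/netip`; the vendor names and addresses (RFC 5737 documentation ranges) are hypothetical. The paper's simulation measures the effect on vendors receiving TI, so it likely also accounts for downstream propagation, which this sketch omits.

```go
package main

import (
	"fmt"
	"net/netip"
	"sort"
)

// evadedVendors returns, sorted, the vendors with at least one sandbox IP inside
// a blocklisted prefix, i.e. sandboxes a blocklist-checking stealer would avoid.
func evadedVendors(sandboxIPs map[string][]string, blocklist []string) ([]string, error) {
	prefixes := make([]netip.Prefix, 0, len(blocklist))
	for _, s := range blocklist {
		p, err := netip.ParsePrefix(s)
		if err != nil {
			return nil, fmt.Errorf("bad prefix %q: %w", s, err)
		}
		prefixes = append(prefixes, p)
	}
	var evaded []string
	for vendor, ips := range sandboxIPs {
		for _, s := range ips {
			addr, err := netip.ParseAddr(s)
			if err != nil {
				return nil, fmt.Errorf("bad address %q: %w", s, err)
			}
			if blocked(addr, prefixes) {
				evaded = append(evaded, vendor)
				break // one matching IP is enough to evade this vendor
			}
		}
	}
	sort.Strings(evaded)
	return evaded, nil
}

// blocked reports whether addr falls inside any of the prefixes.
func blocked(addr netip.Addr, prefixes []netip.Prefix) bool {
	for _, p := range prefixes {
		if p.Contains(addr) {
			return true
		}
	}
	return false
}

func main() {
	// Hypothetical vendors and sandbox egress IPs.
	sandboxes := map[string][]string{
		"vendor-a": {"192.0.2.10"},
		"vendor-b": {"198.51.100.7", "203.0.113.5"},
		"vendor-c": {"203.0.113.200"},
		"vendor-d": {"198.51.100.99"},
	}
	// Hypothetical public blocklist of sandbox ranges.
	blocklist := []string{"192.0.2.0/24", "203.0.113.128/25"}

	evaded, err := evadedVendors(sandboxes, blocklist)
	if err != nil {
		fmt.Println("error:", err)
		return
	}
	fmt.Printf("%d of %d vendors evaded: %v\n", len(evaded), len(sandboxes), evaded)
}
```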

Network IoCs are reshared far more frequently than binaries. This is naturally more efficient, but it means some vendors lose context that the binary itself would provide. In some cases, domain lookups were up to 20x more prevalent than binary executions.

Sharing delays propagate downstream. Although IoCs are typically extracted within minutes, sharing delays of hours to days propagate across the supply chain and lifecycle. In our case studies, DNS firewalls blocked domains within 1-13 hours, but takedowns took 8-11 days, leaving a wide exploitation window for adversaries.

Recommendations

In our paper, we provide recommendations to improve ecosystem resilience.

For vendors:

- Diversify sandbox fingerprints across IP ranges, system configurations, and ASNs. This is operationally expensive, but adversaries actively exploit patterns shared across sandboxes.

- Implement recursive analysis of dropped files and packed files, which are increasingly common.

- Reduce sharing delays, since they compound at every later stage of the TI lifecycle.

- Monitor for sandbox fingerprint abuse using detection signatures.

For operators:

- Assess TI feed sources to understand upstream dependencies and avoid an illusion of consensus, where several feeds agree only because they draw on the same upstream source.

- Prioritize feeds that provide full binaries and behavioral context, not just decontextualized IoCs.

- Consider the risks of uploading binaries to public scanning services. IoCs can serve as honeytokens that reveal breaches of confidentiality or support incident-response auditing.

For researchers:

- Consider the ethical implications of your research: avoid deceiving humans, implement opt-out mechanisms, and so on.

- Account for aggressive IoC scanning during experiments and anticipate large-scale data cleanup.

- Account for vendor dependencies when evaluating TI feeds or training ML models.

My Contributions

While this research was only recently published, I began working on the project in mid-2023. Most of my work focused on designing the Rocket malware, while my co-authors did much of the data analysis.

Malware Design

The core purpose of the Rocket is to serve as a probing binary that vendors will dynamically analyze and run, leaving a provenance trail that traces where it propagates across the TI ecosystem. This is easier said than done, because several competing constraints must be satisfied simultaneously. For instance, the binary must look malicious enough to consistently trigger dynamic execution while remaining defanged for ethical reasons. It must also exfiltrate information about the sandbox environment, but in a privacy-preserving manner.

Inducing Dynamic Execution and a Malicious Verdict

Not all binaries submitted to a vendor are executed; some are only processed statically. Our experiments, however, require that Rockets consistently trigger dynamic analysis, so the binary must exhibit features that static triage deems suspicious enough to escalate it to dynamic analysis.

To do this, we rely on known rules to induce a malicious verdict during static triage. We settled on a combination of content matching malicious YARA signatures (byte sequences and strings) and other techniques, which consistently produced malicious verdicts in a subscription-based private sandbox.

Once the binary reaches dynamic analysis, the sandbox must also produce a malicious verdict to encourage downstream sharing of IoCs. Sandboxes reach verdicts through a variety of rules and observed behaviors: process or file creation, network activity, API call sequences (often captured by hooking user-mode APIs), syscall monitoring, and evasion detection. Conveniently, much of the behavior our measurement needs (environment fingerprinting, file writes to drop Satellites, and network emissions) already raises suspicion. To reinforce a malicious verdict, we also include defanged keylogging behavior that makes the API calls sandboxes look for.
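As a toy model of why this combination works, the Go sketch below separates the two stages: static signature hits decide whether a sample is escalated to dynamic analysis, and weighted run-time behaviors decide the verdict. Every signature, behavior name, weight, and threshold here is hypothetical; production sandboxes use far richer, proprietary rule sets (for example YARA rules and API-hook traces).

```go
package main

import (
	"bytes"
	"fmt"
)

// Hypothetical static signatures (stand-ins for YARA string/byte rules).
var staticSignatures = [][]byte{
	[]byte("GetAsyncKeyState"),
	[]byte("SELECT * FROM Win32_ComputerSystem"),
}

// Hypothetical behavior weights; real scoring models are proprietary.
var behaviorWeights = map[string]int{
	"env_fingerprinting": 2,
	"file_drop":          3,
	"network_beacon":     3,
	"keylogger_api":      4,
}

// escalate reports whether any static signature appears in the sample.
func escalate(sample []byte) bool {
	for _, sig := range staticSignatures {
		if bytes.Contains(sample, sig) {
			return true
		}
	}
	return false
}

// verdict sums the weights of observed behaviors (unknown ones count as 0)
// and compares the total against a threshold.
func verdict(observed []string, threshold int) string {
	score := 0
	for _, b := range observed {
		score += behaviorWeights[b]
	}
	if score >= threshold {
		return "malicious"
	}
	return "benign"
}

func main() {
	sample := []byte("...GetAsyncKeyState...")
	if escalate(sample) {
		fmt.Println(verdict([]string{"env_fingerprinting", "file_drop", "network_beacon"}, 10))
		fmt.Println(verdict([]string{"env_fingerprinting", "file_drop", "network_beacon", "keylogger_api"}, 10))
	}
}
```

With a threshold of 10, fingerprinting, a file drop, and a network beacon alone score 8 and stay benign; adding the keylogging behavior raises the score to 12, mirroring how the defanged keylogger reinforces the verdict.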

Tracking Binary History and Creating a Provenance Trail

During execution, the binary collects environment information and exfiltrates it by querying a lab-controlled HTTP server (an “emission”). However, profiling alone cannot reveal where IoCs have spread or who has executed the binary; it only associates a vendor submission with its initial execution. The key idea is therefore to build provenance tracking into the profiling approach. During profiling, the binary embeds information about the current environment into itself (the “provenance trail”), and during the emission phase, it exfiltrates the entire provenance trail.

To implement this, we first need to understand the restrictions of our exfiltration channel. As mentioned earlier, an authoritative DNS server and an HTTP server monitor for the requests the binary produces. To share the fingerprint with the Observer, the Rocket issues a simple HTTP request and encodes the provenance trail in the fully qualified domain name (FQDN).

This leads to an interesting problem. A textual FQDN can be at most 253 characters long, and each label at most 63 characters. Domain names are also case-insensitive, so our encoding restricts labels to the 36 characters [a-z0-9]. Furthermore, labels must be separated by dots, so 4 characters are lost to label separation. Hence the payload is bounded above by \(249\log_{2}36\approx 1287\) bits. This is far too little to encode long strings or raw IoCs that would uniquely identify each submission. The practical budget is even smaller: the registered domain itself consumes part of the 253 characters, and the entire provenance trail, which grows with every hop, must fit in what remains.

Fingerprinting Sandboxes

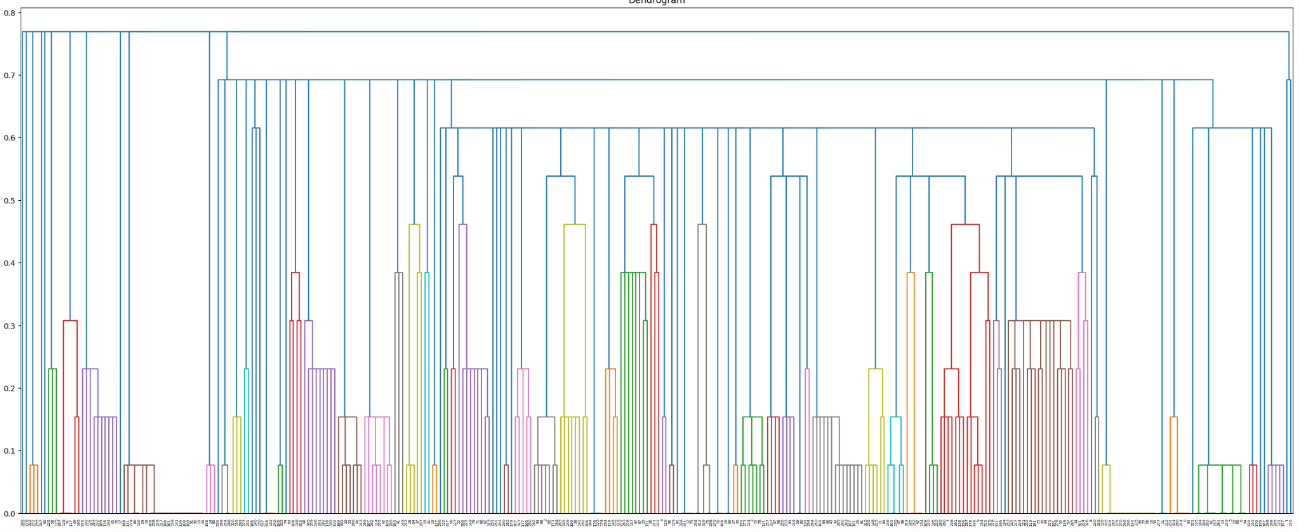

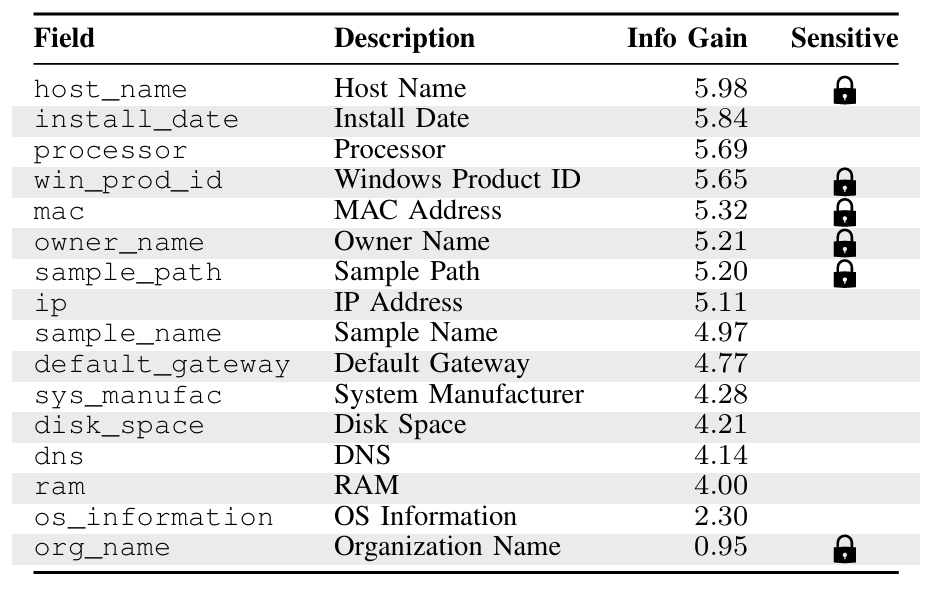

The fingerprints the binary generates during execution were based on a pilot experiment I ran in late 2023 to validate prior work, such as SandPrint: Fingerprinting Malware Sandboxes to Provide Intelligence for Sandbox Evasion. For this pilot, we profiled sandboxes using 16 features and performed single-link hierarchical clustering to group sandboxes by vendor. We then chose the subset of features \(S\) that maximizes the mutual information \(I(F_S;C)=H(F_S)+H(C)-H(F_S,C)\), where \(F_S\) is the joint variable formed by the features in \(S\) and \(C\) is the cluster label.

The dendrogram above visualizes the clustering; each color denotes a different cluster.

The table above shows the information gain for each individual field. It helps build intuition, but it does not fully capture what a field contributes as part of a joint variable.

I evaluated all \(2^{16}\) feature subsets and ultimately settled on install_date, ram, and sys_manufac as the exfiltrated features, which maximize mutual information while remaining privacy-preserving. To validate this choice, we compared the clustering on this subset against the ground truth (clustering on all 16 features): for over 99% of execution pairs, both clusterings agree. Notably, larger feature subsets did not significantly increase this agreement.
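The validation step can be read as a pairwise comparison. Assuming "agreement" means that both clusterings agree on whether each pair of executions belongs together (the Rand index), a minimal Go sketch follows; the cluster assignments in `main` are hypothetical, and only co-membership matters, not the cluster IDs themselves.

```go
package main

import "fmt"

// pairAgreement returns the fraction of execution pairs on which two clusterings
// agree about co-membership (the Rand index). a and b must have equal length.
func pairAgreement(a, b []int) float64 {
	agree, total := 0, 0
	for i := 0; i < len(a); i++ {
		for j := i + 1; j < len(a); j++ {
			if (a[i] == a[j]) == (b[i] == b[j]) {
				agree++
			}
			total++
		}
	}
	if total == 0 {
		return 1 // fewer than two executions: nothing to disagree on
	}
	return float64(agree) / float64(total)
}

func main() {
	full := []int{0, 0, 1, 1, 2}   // hypothetical clustering on all 16 features
	subset := []int{5, 5, 7, 7, 7} // hypothetical clustering on the 3-feature subset
	fmt.Printf("%.2f\n", pairAgreement(full, subset))
}
```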

Single-link hierarchical clustering cut at a fixed threshold can be implemented with a union-find data structure: we merge any two observations whose fingerprints differ in at most a threshold number of features, and the resulting sets are the clusters.
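A minimal Go sketch of that correspondence, assuming Hamming distance over categorical features and a hypothetical threshold `maxDiff`: every pair within the threshold is merged, so chains of close observations end up in one cluster, which is exactly single-link behavior.

```go
package main

import "fmt"

// unionFind is a disjoint-set forest with path halving.
type unionFind struct{ parent []int }

func newUnionFind(n int) *unionFind {
	p := make([]int, n)
	for i := range p {
		p[i] = i
	}
	return &unionFind{parent: p}
}

func (u *unionFind) find(x int) int {
	for u.parent[x] != x {
		u.parent[x] = u.parent[u.parent[x]] // path halving
		x = u.parent[x]
	}
	return x
}

func (u *unionFind) union(a, b int) {
	ra, rb := u.find(a), u.find(b)
	u.parent[ra] = rb
}

// hamming counts the features on which two fingerprints differ.
func hamming(a, b []string) int {
	d := 0
	for i := range a {
		if a[i] != b[i] {
			d++
		}
	}
	return d
}

// singleLink merges every pair within maxDiff differing features and returns a
// cluster ID (the root of its set) for each observation.
func singleLink(obs [][]string, maxDiff int) []int {
	u := newUnionFind(len(obs))
	for i := range obs {
		for j := i + 1; j < len(obs); j++ {
			if hamming(obs[i], obs[j]) <= maxDiff {
				u.union(i, j)
			}
		}
	}
	ids := make([]int, len(obs))
	for i := range obs {
		ids[i] = u.find(i)
	}
	return ids
}

func main() {
	// Hypothetical fingerprints: install_date, ram, sys_manufac.
	obs := [][]string{
		{"2021-03-01", "4096", "VMware"},
		{"2021-03-01", "4096", "QEMU"}, // 1 difference from obs 0
		{"2021-03-01", "8192", "QEMU"}, // 1 from obs 1, 2 from obs 0
		{"2019-11-20", "2048", "Dell"},
	}
	fmt.Println(singleLink(obs, 1))
}
```

In `main`, the first three observations form one cluster through chaining even though the first and third differ in two features; the fourth stays on its own.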

Thus, we can generate a hashed fingerprint from these three features, which we call \(H_i\). This is not yet sufficient, however: a single vendor may execute the same binary multiple times in identical sandbox environments, producing indistinguishable fingerprints. To disambiguate, each execution also generates a random execution ID (\(\epsilon_i\)) that uniquely identifies that run. Combining the system fingerprint with the execution ID lets us distinguish repeated executions within one vendor from executions across different vendors, which is essential for accurately reconstructing the provenance trail.
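The sketch below shows one way to produce \(H_i\) and \(\epsilon_i\) in Go. The SHA-256 digest reduced to 8 base-36 characters, the 6-character execution ID, and the separator between features are assumptions for illustration, not the Rocket's actual parameters.

```go
package main

import (
	"crypto/rand"
	"crypto/sha256"
	"encoding/binary"
	"fmt"
	"strconv"
	"strings"
)

// base36 renders v in base 36 (lowercase), left-padded with zeros to width.
func base36(v uint64, width int) string {
	s := strconv.FormatUint(v, 36)
	if len(s) < width {
		s = strings.Repeat("0", width-len(s)) + s
	}
	return s
}

// fingerprint hashes the three exfiltrated features so raw values never leave the
// sandbox, and keeps a short base-36 digest (H_i). The NUL separator prevents
// ambiguity such as "ab"+"c" versus "a"+"bc".
func fingerprint(installDate, ram, sysManufac string) string {
	sum := sha256.Sum256([]byte(installDate + "\x00" + ram + "\x00" + sysManufac))
	const space = 2821109907456 // 36^8, so the result fits in 8 characters
	return base36(binary.BigEndian.Uint64(sum[:8])%space, 8)
}

// executionID returns a random 6-character base-36 ID (epsilon_i) for this run.
func executionID() (string, error) {
	var b [8]byte
	if _, err := rand.Read(b[:]); err != nil {
		return "", err
	}
	const space = 2176782336 // 36^6
	return base36(binary.BigEndian.Uint64(b[:])%space, 6), nil
}

func main() {
	h := fingerprint("2021-03-01", "4096", "VMware") // hypothetical feature values
	e, err := executionID()
	if err != nil {
		fmt.Println("error:", err)
		return
	}
	fmt.Println("trail entry:", e+h)
}
```

Short digests keep each entry within the FQDN budget, at the cost of a small chance that two different environments collide on the same \(H_i\).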

Furthermore, we generate a unique binary ID \(b\) for each of the 30 submissions, grouped into three sets of 10 by vendor type. Each group is assigned a domain, and each binary ID is assigned a letter from A to I.

Checksums can also be built into the provenance trail. Our observers also see background noise such as fuzzing, and it is important to separate real observations from it. I initially implemented a CRC error-detection check in our data analysis pipeline, but it turned out to be largely unnecessary, since such errors show up as malformed provenance trails.
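A minimal sketch of such a checksum, assuming CRC-32 (IEEE) rendered as a fixed-width, 7-character base-36 suffix (since \(36^7 > 2^{32}\)); the original pipeline's CRC variant and layout are not specified. CRC-32 detects every single-character corruption, but as noted above, structural validation of the trail already catches most noise.

```go
package main

import (
	"fmt"
	"hash/crc32"
	"strconv"
	"strings"
)

const crcWidth = 7 // 36^7 > 2^32, so 7 base-36 digits always suffice

// withChecksum appends a zero-padded base-36 CRC-32 of the trail. It uses only
// [0-9a-z], so the result stays within the DNS label alphabet.
func withChecksum(trail string) string {
	sum := strconv.FormatUint(uint64(crc32.ChecksumIEEE([]byte(trail))), 36)
	return trail + strings.Repeat("0", crcWidth-len(sum)) + sum
}

// verify splits off the checksum and reports whether it matches the trail.
func verify(s string) (string, bool) {
	if len(s) < crcWidth {
		return "", false
	}
	trail := s[:len(s)-crcWidth]
	return trail, withChecksum(trail) == s
}

func main() {
	s := withChecksum("a1k9x2m4q7") // hypothetical trail text
	fmt.Println(s)
	fmt.Println(verify(s))
	fmt.Println(verify("b" + s[1:])) // first character corrupted
}
```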

Finally, we create the provenance trail with the following construction:

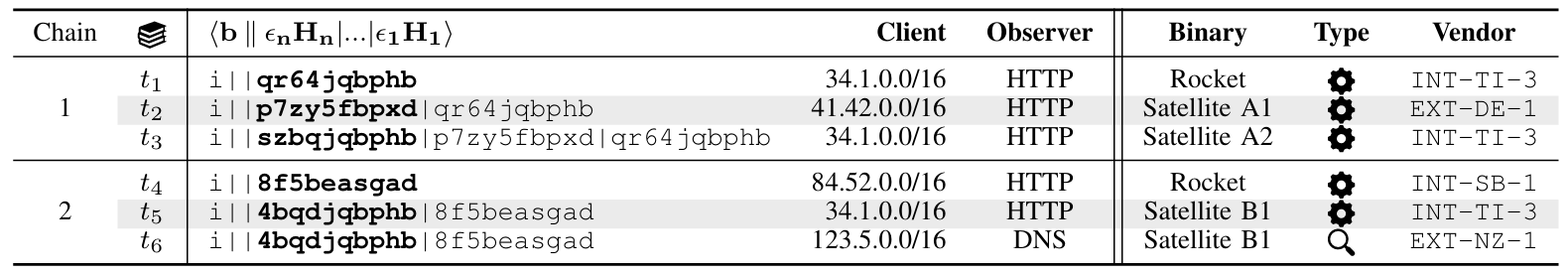

\[\langle b \parallel \epsilon_n H_n \parallel \dots \parallel \epsilon_1 H_1 \rangle\]

Each execution adds its own entry and then exfiltrates the entire provenance trail, so we can see the full history of where the binary has been shared. Importantly, if the history diverges at some point, we observe the divergence as a fork in the provenance trail. If the dropped Satellite is also executed in the same environment, we see two executions from a similar sandbox.
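A minimal Go sketch of the trail as a data structure, assuming entries are stored oldest first and rendered newest first after the binary ID, as in the construction above. The `.` separator, the sample IDs, and the `forkPoint` helper are hypothetical.

```go
package main

import (
	"fmt"
	"strings"
)

// Entry is one execution: a random execution ID and an environment fingerprint.
type Entry struct{ ExecID, Fingerprint string }

// Trail is the provenance trail: the binary ID plus entries, oldest first.
type Trail struct {
	BinaryID string
	Entries  []Entry
}

// Extend returns a new trail with one more execution. The receiver is not
// modified, so a Satellite and its parent never share a backing array.
func (t Trail) Extend(e Entry) Trail {
	entries := make([]Entry, len(t.Entries), len(t.Entries)+1)
	copy(entries, t.Entries)
	return Trail{BinaryID: t.BinaryID, Entries: append(entries, e)}
}

// String renders the binary ID followed by entries, newest first.
func (t Trail) String() string {
	parts := []string{t.BinaryID}
	for i := len(t.Entries) - 1; i >= 0; i-- {
		parts = append(parts, t.Entries[i].ExecID+t.Entries[i].Fingerprint)
	}
	return strings.Join(parts, ".")
}

// forkPoint returns how many leading (oldest) entries two trails share. If both
// trails are longer than that, the history forked right after the shared prefix.
func forkPoint(a, b Trail) int {
	n := 0
	for n < len(a.Entries) && n < len(b.Entries) && a.Entries[n] == b.Entries[n] {
		n++
	}
	return n
}

func main() {
	root := Trail{BinaryID: "a"}.Extend(Entry{"k3x9q1", "7f2m0c4d"})
	left := root.Extend(Entry{"p0z8r2", "9b1n5e6a"})
	right := root.Extend(Entry{"w4t6y3", "3c8v2k1j"})
	fmt.Println(left)
	fmt.Println(right)
	fmt.Println("shared prefix:", forkPoint(left, right))
}
```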

Consider these log entries as examples:

We can automatically make many deductions from these entries.

In Chain 1, the Rocket is first executed by INT-TI-3 (\(t_1\)). The resulting Satellite A1 is then executed by EXT-DE-1 (\(t_2\)); its provenance trail contains INT-TI-3’s execution ID, so EXT-DE-1 received the Satellite from INT-TI-3. Next, Satellite A2 is executed by INT-TI-3 again (\(t_3\)), with a provenance trail containing both prior execution IDs. This implies that EXT-DE-1 shared the Satellite back to INT-TI-3, revealing a cyclic sharing relationship between the two vendors. Note also that \(t_1\) and \(t_3\) have the same fingerprint but different execution IDs, as expected for two runs in the same vendor's environment.

In Chain 2, the Rocket is first executed by INT-SB-1 (\(t_4\)). Its Satellite B1 is then executed by INT-TI-3 (\(t_5\)) via HTTP, and later the same Satellite’s domain is queried by EXT-NZ-1 (\(t_6\)) via DNS only. The DNS-only lookup at \(t_6\) indicates that INT-TI-3 shared the domain IoC with EXT-NZ-1 rather than the binary itself; this is how we distinguish binary sharing from IoC sharing.
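These deductions can be automated. A minimal Go sketch, assuming each observation carries an attributed vendor label, the channel it arrived on (HTTP or DNS only), and the execution IDs parsed from its trail, oldest first. The `Observation` schema and the execution IDs are hypothetical; the vendor labels follow the two chains described above.

```go
package main

import "fmt"

// Observation is one emission seen by the Observer (hypothetical schema).
type Observation struct {
	Vendor  string   // vendor label attributed to the source
	Channel string   // "http" (binary executed) or "dns" (lookup only)
	Trail   []string // execution IDs from the FQDN, oldest first
}

// Edge is an inferred sharing relationship.
type Edge struct{ From, To, Kind string }

// inferEdges walks observations in time order. An HTTP emission whose trail has a
// predecessor means the binary moved from the predecessor's vendor; a DNS-only
// lookup of an existing trail by another vendor means only the domain IoC moved.
func inferEdges(obs []Observation) []Edge {
	vendorOf := map[string]string{} // execution ID -> vendor that produced it
	var edges []Edge
	for _, o := range obs {
		n := len(o.Trail)
		if n == 0 {
			continue // malformed trail: treat as noise
		}
		switch o.Channel {
		case "http":
			vendorOf[o.Trail[n-1]] = o.Vendor
			if n >= 2 {
				if prev, ok := vendorOf[o.Trail[n-2]]; ok {
					edges = append(edges, Edge{prev, o.Vendor, "binary"})
				}
			}
		case "dns":
			if prev, ok := vendorOf[o.Trail[n-1]]; ok && prev != o.Vendor {
				edges = append(edges, Edge{prev, o.Vendor, "ioc"})
			}
		}
	}
	return edges
}

func main() {
	obs := []Observation{
		{"INT-TI-3", "http", []string{"e1"}},             // t1
		{"EXT-DE-1", "http", []string{"e1", "e2"}},       // t2
		{"INT-TI-3", "http", []string{"e1", "e2", "e3"}}, // t3
		{"INT-SB-1", "http", []string{"e4"}},             // t4
		{"INT-TI-3", "http", []string{"e4", "e5"}},       // t5
		{"EXT-NZ-1", "dns", []string{"e4", "e5"}},        // t6
	}
	for _, e := range inferEdges(obs) {
		fmt.Printf("%s -> %s (%s)\n", e.From, e.To, e.Kind)
	}
}
```

The output lists INT-TI-3 -> EXT-DE-1 and EXT-DE-1 -> INT-TI-3 as binary edges (the cycle in Chain 1), INT-SB-1 -> INT-TI-3 as a binary edge, and INT-TI-3 -> EXT-NZ-1 as an IoC-only edge (Chain 2).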

Rocket/Satellite Implementation

The binary itself was written in Go for two reasons:

- Cross-compilation ability

- Single-binary deployment: no DLLs are needed, which simplifies the Rocket/Satellite dropping mechanism

The main implementation challenge is the Satellite dropping mechanism. Recall that upon execution, a Rocket must drop a modified copy of itself (a Satellite) with an updated provenance trail.

To do this, we reserve a fixed-length region in the compiled binary as a buffer for the provenance trail. During execution, the Rocket writes a copy of itself as the Satellite, finds the buffer, and overwrites it with the new provenance trail. Because the modification happens in place, only a small portion of the file changes. See this portion of an early version of the code:

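```go
package main

// Excerpt: dropSatellite is called from the Rocket's main function.

import (
	"fmt"
	"os"
	"os/exec"
	"regexp"
)

// copyFlag is assumed to be set at build time (e.g. -ldflags "-X main.copyFlag=true").
// It was named `copy` originally, which shadows Go's builtin copy.
var copyFlag string

// historyBuf matches the reserved provenance buffer: 256 characters between markers.
// (?s) lets '.' match '\n' too; the buffer is assumed to hold ASCII only,
// so 256 characters are 256 bytes.
var historyBuf = regexp.MustCompile(`(?s)<<<<.{256}>>>>`)

// dropSatellite writes a copy of the running binary to newFilename with the
// provenance buffer overwritten in place by newHistory.
func dropSatellite(newFilename, newHistory string) error {
	content, err := os.ReadFile(os.Args[0])
	if err != nil {
		return fmt.Errorf("reading self: %w", err)
	}

	match := historyBuf.FindIndex(content)
	if match == nil {
		return fmt.Errorf("provenance buffer not found")
	}

	// Overwrite only the bytes between the markers, padding with spaces, so the
	// file length and every offset in the executable stay the same and the
	// Satellite can locate its own buffer again.
	buf := content[match[0]+4 : match[1]-4]
	if len(newHistory) > len(buf) {
		return fmt.Errorf("history exceeds %d-byte buffer", len(buf))
	}
	n := copy(buf, newHistory)
	for i := n; i < len(buf); i++ {
		buf[i] = ' '
	}

	if err := os.WriteFile(newFilename, content, 0644); err != nil {
		return fmt.Errorf("writing satellite: %w", err)
	}

	// Delete itself when done. Windows generally refuses to delete a running
	// executable, so this can fail while the process is still alive.
	if err := exec.Command("cmd.exe", "/c", "del", os.Args[0]).Start(); err != nil {
		return fmt.Errorf("starting self-delete: %w", err)
	}

	// If the copy flag is set, move the Satellite into the Rocket's place.
	if copyFlag == "true" {
		if err := os.Rename(newFilename, "temp.exe"); err != nil {
			return fmt.Errorf("renaming the file: %w", err)
		}
		// Pass each argument separately; "move temp.exe" as a single argument
		// would be quoted and treated as one (invalid) command name.
		if err := exec.Command("cmd.exe", "/c", "move", "/y", "temp.exe", os.Args[0]).Start(); err != nil {
			return fmt.Errorf("starting move: %w", err)
		}
	}
	return nil
}
```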

In one experiment on sandbox evasion, we pack the Rocket with the UPX packer. We also encrypt the main sandbox profiler with AES, and an “outer profiler” decrypts this inner profiler at run time:

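```go
package main

import (
	"bytes"
	"crypto/aes"
	"crypto/cipher"
	_ "embed"
	"encoding/hex"
	"fmt"
	"os"
	"strings"
)

//go:embed innerprofiler.enc
var encFile string // hex-encoded, AES-128-CBC-encrypted inner profiler

var basename string // output file name, assumed to be set via -ldflags -X

func main() {
	key := []byte{0x2b, 0x32, 0x2c, 0xad, 0x83, 0xeb, 0xc4, 0x31, 0xd1, 0xee, 0xe3, 0x86, 0x8e, 0x48, 0xbc, 0x4f}
	iv := []byte{0xfe, 0xe2, 0xb9, 0x2c, 0xf5, 0xb8, 0xb2, 0x60, 0xe2, 0x92, 0x96, 0x68, 0xc0, 0x99, 0xf1, 0x9d}

	// TrimSpace tolerates a trailing newline in the embedded hex file.
	ciphertext, err := hex.DecodeString(strings.TrimSpace(encFile))
	if err != nil {
		fmt.Println("Error decoding hex string:", err)
		return
	}
	// CryptBlocks panics unless the input is a whole number of blocks.
	if len(ciphertext) == 0 || len(ciphertext)%aes.BlockSize != 0 {
		fmt.Println("Error: ciphertext length is not a multiple of the block size")
		return
	}

	block, err := aes.NewCipher(key)
	if err != nil {
		fmt.Println("Error creating cipher block:", err)
		return
	}
	decrypted := make([]byte, len(ciphertext))
	cipher.NewCBCDecrypter(block, iv).CryptBlocks(decrypted, ciphertext)

	// Validate PKCS#7 padding before stripping it, instead of trusting the last byte.
	pad := int(decrypted[len(decrypted)-1])
	if pad == 0 || pad > aes.BlockSize ||
		!bytes.Equal(decrypted[len(decrypted)-pad:], bytes.Repeat([]byte{byte(pad)}, pad)) {
		fmt.Println("Error: invalid padding")
		return
	}
	decrypted = decrypted[:len(decrypted)-pad]

	if err := os.WriteFile(basename, decrypted, 0644); err != nil {
		fmt.Println("Error writing decrypted data to file:", err)
		return
	}
}
```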

This experiment (Experiment II in the paper) tests whether vendors recursively analyze dropped files, which affects how much TI the ecosystem extracts and how deep its analysis goes. Packed Rockets were executed 35% less often than unpacked Rockets (Experiment I), despite receiving more AV detections on VirusTotal. This suggests that vendors are not properly unpacking and analyzing dropped payloads.

In another, unpublished experiment, we hypothesized that fuzzy hashing (such as ssdeep) would reveal that many similar samples of our Rocket had been submitted before, affecting detection rates and sharing behavior. To counter this, we tried two approaches:

- Compile-time mutations. We experimented with introducing natural variation into the binary by inserting dead code, reordering functions, or substituting equivalent code. This alone was insufficient to defeat fuzzy hashing: ssdeep is a context-triggered piecewise hash, in which a rolling hash chooses chunk boundaries from local content, so small, localized changes alter only a few chunk hashes and the similarity score stays high.

- Embedding random byte regions. ssdeep summarizes a file as a bounded-length sequence of chunk hashes, with the chunk size scaling with the file size. Embedding a large region of random bytes into each Rocket at compile time therefore dilutes the proportion of content shared between builds. This worked, but it bloats the binary.

Observer Design

I also contributed to the Observer’s IoC probing mechanism. Recall that to answer RQ2 (disruption), we need to track when and how vendors act on the intelligence they extract, for example when domains are blocked by DNS resolvers or OSINT blocklists, or suspended by registrars. To do this, we run a set of cron jobs every few hours that probe external services for the presence of our IoCs. These probes check:

- DNS blocklists: whether resolvers such as Quad9, Google DNS, Cloudflare, or Palo Alto DNS Security block our domains. We issue DNS queries through each resolver and check for responses that indicate blocking.

- OSINT feeds: whether our domains, IPs, or file hashes appear in Google Safe Browsing, FireHOL, VirusTotal, or AlienVault OTX. For VirusTotal and OTX, we query their APIs for detection counts and AV labels.

- Domain suspension: whether the registrar has suspended or taken down our domains, detected by checking DNS resolution.

Logging the timestamp and result of every probe lets us reconstruct when each IoC transitioned from active to blocked to suspended. Combined with the emission logs, this gives us the full lifecycle of each IoC.

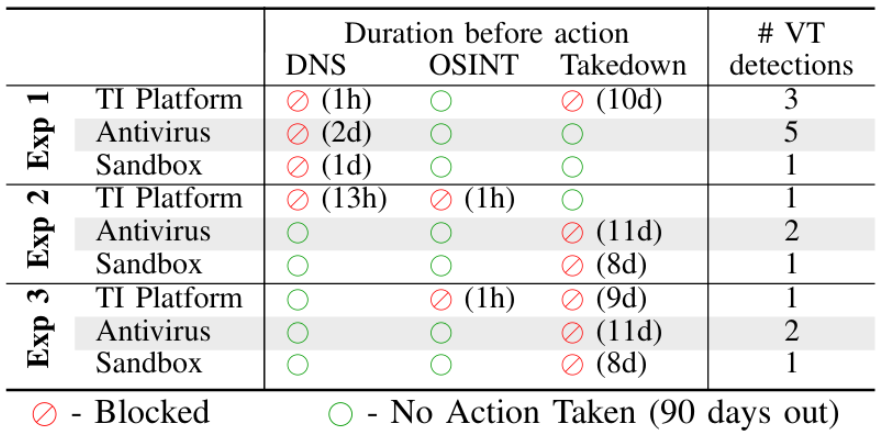

The table from our paper above summarizes the results.

Notably, commercial DNS blocking could happen within an hour of sharing, whereas domain suspension took 8-11 days on average and in many cases never happened within our 90-day observation window.

Miscellaneous

Ethical Considerations and Response

Actively measuring security infrastructure naturally raises ethical concerns. Because our binaries are submitted to real vendor pipelines, they consume real compute resources and generate real IoCs that could affect downstream vendors. We adopted several safeguards to minimize harm:

- Satellite dropping was limited to a depth of 5, which prevents unbounded re-execution loops (and accidental DoS) between vendors in a cyclic sharing relationship.

- We implement an opt-out mechanism: a disclaimer and opt-out link are embedded in the binary itself and printed upon execution.

- We implement privacy-preserving fingerprinting. All sandbox features are hashed before transmission, and features that could expose sensitive information about a vendor’s environment were deliberately excluded.

We reached out to all 30 vendors we studied to inform them of our findings and coordinate public disclosure. Only 17 vendors responded, many of them appreciative of the detailed analysis. Interestingly, some did not consider our findings a vulnerability at all, instead describing their behavior as a design decision.

Being Found in Another Paper lol

In an odd coincidence, our experiments were detected by Palo Alto Networks in their 2025 IEEE paper “Resolution Without Dissent: In-Path Per-Query Sanitization to Defeat Surreptitious Communication Over DNS”:

There are 104 cases where we cannot decide the purpose of queries at a confident determination. The FQDNs of these cases look like tunneling and there is no useful information on the Internet. For example, the domain 9mn[.]lat has a number of tunneling-like FQDNs, e.g., dpdf3d[…redacted…]skpx.9mn[.]lat and dc81[…redacted…]cof.9mn[.]lat. The only useful information we found is that 9mn[.]lat was registered on 2023-12-23 and will expire on 2024-12-23. Actually, 70 of the 104 domains are registered within one year and the expiration dates are also within one year. We posit that blocking these low-profile new domains should have trivial business impact on enterprise networks.

They appear to have been studying DNS traffic specifically rather than actively looking up the IoCs, so they never came across our disclaimer. Still, it is nice validation that our binary behaved realistically enough to trigger production security systems.

Acknowledgements

This project has been in the works since mid-2023, and I’m very grateful to many people who made it possible. First, I’d like to thank my advisor Fabian Monrose, whose intuition for the right research questions greatly guided the direction of this work. I’d also like to thank Tillson Galloway and Omar Alrawi, whose expertise in TI research and network security was invaluable throughout this project.